Preparing for the Rise of Agentic AI in Commerce: Fraud, Identity, and Trust

The digital commerce landscape is evolving at an unprecedented pace, driven not just by human users but increasingly by AI agents acting on their behalf. During the first Trust Talks virtual panel hosted by Microblink, industry experts explored the opportunities and challenges presented by autonomous AI agents capable of opening accounts, moving money, and making financial decisions. The panelists were Melisande Mual, Managing Director, The Paypers; Gianmichele Zappia, Head of Risk and Fraud, GetYourGuide; Vasileios Konteas, Product Manager, XM; Holly Sandberg, Director, Trust & Safety, Reverb; and Albert Roux, EVP of Product, Microblink.

The Emergence of Agentic AI

AI agents are no longer theoretical. Today, consumers can delegate complex tasks like booking travel, managing subscriptions, or even executing financial transactions to software agents powered by increasingly sophisticated AI. These agents navigate websites, fill in forms, make payments, and make decisions based on user-defined rules. For tech-savvy early adopters, this automation saves time and reduces friction, but it also introduces a new frontier of risk that organizations must address proactively.

Panelists highlighted that agentic AI is particularly relevant in industries such as travel and e-commerce, where AI can automate search, comparison, and booking processes. However, the same capabilities that streamline legitimate interactions also create opportunities for misuse. Fraud teams now face a more complex environment: they must distinguish between humans, benign bots, and potentially malicious AI agents. Traditional identity and risk models, which were built around human behavior, are no longer sufficient.

Fraud Prevention in an AI-Driven World

As AI agents become more capable, the potential for large-scale fraudulent activity grows. Fraud teams need to rethink both detection and prevention strategies. Panelists emphasized that velocity analysis, behavioral monitoring, and path tracking are crucial for spotting abnormal activity. For example, a sudden spike in transactions from a single AI agent, or repeated attempts to bypass verification workflows, can indicate misuse.
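
The velocity check described above can be sketched as a sliding-window counter per agent. This is a minimal illustration, not a method presented by the panel: the `VelocityMonitor` class, the `agent-123` identifier, and the limit of 3 transactions per 60 seconds are all hypothetical choices made for the example.

```python
from collections import defaultdict, deque


class VelocityMonitor:
    """Flags an agent whose transaction count in a sliding window exceeds a limit."""

    def __init__(self, max_events: int, window_seconds: float):
        self.max_events = max_events          # hypothetical threshold
        self.window_seconds = window_seconds  # hypothetical window length
        self._events = defaultdict(deque)     # agent_id -> recent timestamps

    def record(self, agent_id: str, timestamp: float) -> bool:
        """Record one transaction; return True if the agent is over the limit."""
        window = self._events[agent_id]
        window.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_events


monitor = VelocityMonitor(max_events=3, window_seconds=60)
# Four transactions within 30 seconds, then one much later.
flags = [monitor.record("agent-123", t) for t in (0, 10, 20, 30, 200)]
print(flags)  # [False, False, False, True, False]
```

Only the fourth transaction is flagged, because it is the first to push the agent past three events inside one window. In practice the thresholds would likely need tuning per agent type, since a legitimate agent may transact faster than a human would.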

Preventing fraud before it happens is far more effective than reacting afterward. Organizations must implement monitoring systems that track AI behavior in real time, flagging anomalies that could indicate automated abuse. This involves integrating advanced analytics, behavioral biometrics, and machine learning models trained to differentiate between human and AI patterns.

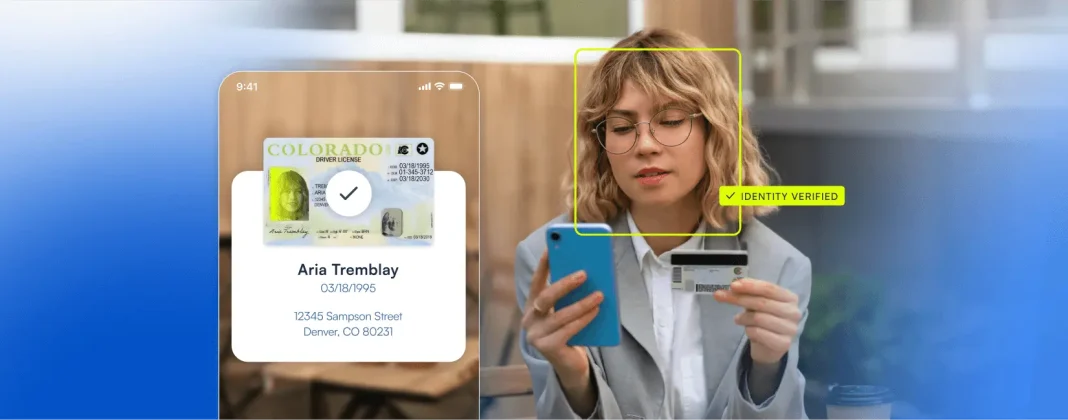

Identity Verification for Autonomous Agents

One of the most critical topics discussed was how to verify the identity of AI agents. Just as humans carry IDs and credentials, AI agents require verifiable digital identities. Without proper identification, businesses risk exposing themselves to fraud, regulatory violations, and reputational damage.

Experts pointed to decentralized identifiers and digital signatures as emerging solutions. These tools allow agents to authenticate themselves in a verifiable, tamper-evident way, while also linking them to a human or organizational owner. This accountability is crucial, particularly in regulated industries such as finance and travel. Technical implementation of agent identity verification is feasible within months, but aligning legal and regulatory frameworks will take longer.
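
To illustrate how a signed credential can bind an agent to its owner, here is a simplified sketch. It deliberately swaps in HMAC-SHA256 with a shared secret from the Python standard library as a stand-in; a real deployment of decentralized identifiers would more likely use asymmetric signatures, so that verifiers never hold the signing key. The credential fields, the `did:example:` identifiers, and the function names are assumptions made for this example only.

```python
import hashlib
import hmac
import json


def sign_credential(credential: dict, secret: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over a canonical JSON encoding of the credential."""
    payload = json.dumps(credential, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(secret, payload, hashlib.sha256).hexdigest()


def verify_credential(credential: dict, signature: str, secret: bytes) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = sign_credential(credential, secret)
    return hmac.compare_digest(expected, signature)


secret = b"demo-shared-secret"  # hypothetical key, for illustration only
credential = {
    "agent_id": "did:example:agent-123",  # hypothetical agent identifier
    "owner": "did:example:alice",         # hypothetical human owner
    "scope": ["book_travel"],             # hypothetical permitted action
}
signature = sign_credential(credential, secret)

# Changing the owner after signing invalidates the signature.
tampered = {**credential, "owner": "did:example:mallory"}

print(verify_credential(credential, signature, secret))  # True
print(verify_credential(tampered, signature, secret))    # False
```

The point of the sketch is the binding: the owner and permitted scope are part of the signed payload, so neither can be altered without the change being detected.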

Behavioral Telemetry as a Fraud Signal

Monitoring AI behavior is another vital layer of defense. Panelists discussed the use of behavioral telemetry to track agent interactions across digital platforms. By analyzing patterns of navigation, decision-making, and transaction execution, organizations can detect misuse or model drift, which is the deviation of AI behavior from its intended design.
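
One simple way to picture drift detection is to compare a recent behavioral metric against the agent's own baseline. The sketch below uses a plain z-score on a single hypothetical metric (actions per session). The metric, the sample values, the function names, and the threshold of 3.0 are assumptions for illustration, and production systems would likely combine many signals.

```python
from statistics import mean, stdev


def drift_score(baseline: list[float], recent: list[float]) -> float:
    """Distance of the recent mean from the baseline mean, in baseline standard deviations."""
    sigma = stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variance; cannot compute a z-score")
    return abs(mean(recent) - mean(baseline)) / sigma


def has_drifted(baseline: list[float], recent: list[float], threshold: float = 3.0) -> bool:
    """Flag drift when the recent mean is more than `threshold` deviations away."""
    return drift_score(baseline, recent) > threshold


# Hypothetical actions-per-session counts for one agent.
baseline_sessions = [4, 5, 6, 5, 4, 6]
typical_sessions = [5, 6, 4]
unusual_sessions = [12, 14, 13]

print(has_drifted(baseline_sessions, typical_sessions))  # False
print(has_drifted(baseline_sessions, unusual_sessions))  # True
```

A per-agent baseline matters here: behavior that is normal for one agent (for example, a high-volume booking assistant) could be a strong anomaly for another.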

Behavioral biometrics, which measure unique interaction patterns, can help distinguish between human users, benign automation, and potentially malicious AI agents. This approach is not only about spotting fraud; it is also about maintaining trust in the ecosystem. Organizations must be able to assess intent, enforce compliance with permitted behaviors, and act quickly when anomalies appear.

Trust as the Foundation of Commerce

Underlying all these technical considerations is a broader, philosophical shift: trust becomes the currency of agentic commerce. When autonomous agents operate on behalf of humans, businesses can no longer rely solely on human verification. They must implement a chain of trust that extends from the human owner, through the AI agent, to the transaction itself.

Panelists emphasized that trust is not a static concept; rather, it requires continuous validation and oversight. Organizations must establish robust monitoring systems, verification protocols, and accountability structures to maintain confidence in their platforms. In essence, identity, verification, and behavior tracking converge to create a foundation of trust that underpins every transaction involving an AI agent.

Preparing for the Future

The rise of agentic AI is inevitable. Businesses that act proactively to understand and mitigate the associated risks will gain a competitive advantage, while those that ignore the shift may face operational and regulatory headaches. Preparing for this future requires a multi-layered approach:

- Implementing verifiable agent identities to ensure accountability.

- Monitoring AI behavior in real time to detect anomalies and prevent misuse.

- Applying behavioral analytics and biometrics to differentiate between humans and AI agents.

- Establishing robust fraud prevention frameworks that anticipate large-scale automated abuse.

- Fostering a culture of trust that combines technology, policy, and oversight.

As AI agents become more prevalent, these measures will not just protect organizations; they will define the standard of trust for the digital economy. For more information on other panels in our Trust Talks series, register today.