What Are Video Deepfake Attacks?

Video deepfake attacks use artificial intelligence to create convincing fake videos that manipulate someone’s appearance, voice, or actions for malicious purposes. These AI-generated videos threaten individuals, businesses, and society by enabling fraud, misinformation, and social engineering attacks. Understanding how these attacks work and how to defend against them is essential for anyone navigating today’s digital landscape.

Understanding Video Deepfake Technology and Its Mechanisms

Video deepfake attacks rely on deep learning models, most notably Generative Adversarial Networks (GANs), to create realistic but fabricated videos. The term "deepfake" combines "deep learning" and "fake," reflecting the AI techniques used to generate these deceptive media files.

The creation process involves training two competing neural networks: a generator that creates fake content and a discriminator that attempts to detect fakes. Through this adversarial process, the generator becomes increasingly sophisticated at creating realistic fake videos that can fool both human observers and detection systems.
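
The adversarial loop described above can be sketched with a deliberately tiny example. This is a toy under stated assumptions, not how production deepfake models are built: the "data" is a single number drawn from a Gaussian rather than video frames, the generator and discriminator are one-line linear models rather than deep networks, and the names `sigmoid` and `train_toy_gan`, the learning rate, and the target distribution are all hypothetical choices made for illustration.

```python
import math
import random


def sigmoid(t: float) -> float:
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 2000, lr: float = 0.01, seed: int = 0) -> dict:
    """Alternate discriminator and generator updates on 1-D data.

    Assumptions (illustrative only): real samples come from N(4, 0.5),
    the generator is G(z) = a*z + b, and the discriminator is
    D(x) = sigmoid(w*x + c).
    """
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator parameters
    w, c = 0.0, 0.0  # discriminator parameters
    for _ in range(steps):
        x_real = rng.gauss(4.0, 0.5)
        z = rng.gauss(0.0, 1.0)
        x_fake = a * z + b

        # Discriminator step: minimise -log D(x_real) - log(1 - D(x_fake)).
        d_real = sigmoid(w * x_real + c)
        d_fake = sigmoid(w * x_fake + c)
        w -= lr * (-(1.0 - d_real) * x_real + d_fake * x_fake)
        c -= lr * (-(1.0 - d_real) + d_fake)

        # Generator step: minimise -log D(G(z)), i.e. try to fool the
        # freshly updated discriminator.
        d_fake = sigmoid(w * x_fake + c)
        grad_x = -(1.0 - d_fake) * w
        a -= lr * grad_x * z
        b -= lr * grad_x
    return {"a": a, "b": b, "w": w, "c": c}


if __name__ == "__main__":
    print(train_toy_gan())
```

Early in training the discriminator learns that larger values look "real", which in turn pushes the generator offset `b` upward toward the real data. Small setups like this can oscillate rather than settle, which hints at why training real generative models takes far more care.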

How Deepfakes Differ from Traditional Video Manipulation

The following table illustrates how deepfake technology compares to other video manipulation methods:

| Technology Type | Technical Approach | Realism Level | Creation Time | Technical Barrier | Distinguishing Features |
|---|---|---|---|---|---|
| Traditional Video Editing | Manual frame-by-frame editing | Medium | Hours to days | Medium | Visible editing artifacts, inconsistent lighting |
| Face-Swapping Apps | Template-based overlay | Low to Medium | Minutes | Low | Limited facial expressions, poor edge blending |
| AI-Generated Deepfakes | Neural network synthesis | High to Very High | Hours to weeks | High | Seamless integration, natural expressions |
| Voice Synthesis | Audio cloning algorithms | High | Minutes to hours | Medium | Perfect voice matching, unnatural speech patterns |
| Real-time Deepfakes | Live AI processing | Medium to High | Real-time | Very High | Immediate generation, processing limitations |

Key characteristics that distinguish deepfakes include:

- Pixel-level manipulation: Unlike traditional editing that overlays or cuts footage, deepfakes generate new pixels based on learned patterns

- Facial expression synthesis: Advanced algorithms can create natural-looking expressions and movements

- Voice synchronization: Modern deepfakes can match lip movements to synthesized speech

- Contextual awareness: AI systems can adapt lighting, shadows, and perspective to match the target environment

Attack Vectors and Deployment Methods Used by Threat Actors

Threat actors deploy deepfake videos through various attack vectors, each designed to exploit different vulnerabilities and achieve specific malicious objectives. Understanding these methods helps organizations and individuals recognize potential threats and implement appropriate defenses.

Primary Attack Methods

The following table compares different deepfake attack methods and their characteristics:

| Attack Type | Description | Primary Target | Technical Complexity | Common Use Cases | Detection Difficulty |
|---|---|---|---|---|---|
| Face-Swap Impersonation | Replacing one person’s face with another’s | Executives, public figures | Medium | CEO fraud, identity theft | Moderate |
| Voice Cloning | Synthesizing someone’s voice patterns | Business leaders, family members | Medium | Phone scams, social engineering | Difficult |
| Full-Body Puppeteering | Controlling entire body movements | Politicians, celebrities | High | Disinformation campaigns | Difficult |
| Real-Time Deepfakes | Live video manipulation during calls | Remote workers, executives | Very High | Video conference fraud | Very Difficult |
| Synthetic Media Creation | Generating entirely fake personas | General public | High | Fake social profiles, catfishing | Moderate |
| Audio-Visual Synchronization | Matching fake audio to real video | News figures, influencers | Medium | Misinformation, reputation damage | Difficult |

Attack Deployment Strategies

Pre-recorded Attacks involve creating deepfake content in advance and distributing it through various channels. Attackers use social media platforms for viral misinformation, email attachments for targeted phishing campaigns, fake news websites to spread disinformation, and dating platforms for romance scams.

Real-time Attacks use live deepfake generation during interactions. These include video conference calls impersonating executives, live streaming platforms for immediate deception, customer service interactions for social engineering, and remote authentication bypass attempts.

Accessibility and Tools

The democratization of deepfake technology has lowered the barrier to entry. Consumer apps offer simple face swaps on mobile devices, open-source frameworks such as DeepFaceLab and FaceSwap are free to use, cloud-based platforms sell deepfake creation as a service, and commercial software provides professional-grade tools for higher-quality output.

Practical Techniques for Identifying Manipulated Video Content

Detecting deepfake videos requires a combination of visual inspection, behavioral analysis, and technical verification methods. While detection technology continues to evolve, several reliable indicators can help identify manipulated content.

Detection Methods and Indicators

The following table provides a comprehensive guide to deepfake detection approaches:

| Detection Method | What to Look For | Skill Level Required | Reliability | Tools/Resources Needed | Best Used For |
|---|---|---|---|---|---|
| Visual Inspection | Unnatural eye movement, facial inconsistencies | Beginner | Medium | Human observation | Quick initial assessment |
| Lip-Sync Analysis | Mismatched mouth movements to speech | Beginner | High | Audio-visual comparison | Video calls, speeches |
| Behavioral Analysis | Unusual mannerisms, speech patterns | Intermediate | High | Knowledge of subject | Known individuals |
| Metadata Examination | File properties, creation timestamps | Intermediate | Medium | Technical tools | Forensic investigation |
| AI Detection Platforms | Automated deepfake identification | Beginner | High | Specialized software | Bulk content screening |
| Temporal Consistency | Frame-to-frame irregularities | Advanced | Very High | Video analysis tools | Professional verification |
| Contextual Verification | Cross-referencing with known facts | Beginner | High | Research skills | News verification |
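
As one illustration of the lip-sync row in the table, the audio-visual comparison can be approximated by checking whether mouth movement and audio loudness rise and fall together. The sketch below assumes that per-frame mouth-opening and audio-level measurements have already been extracted by some other tool. The function names and the `min_corr` threshold are hypothetical, and a plain correlation is a simplification of what dedicated lip-sync analysis does.

```python
import math


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences.

    Returns 0.0 when either sequence is constant, since correlation is
    undefined in that case.
    """
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def lip_sync_suspicious(mouth_opening, audio_level, min_corr=0.5):
    """Flag a clip whose mouth movement does not track its audio.

    min_corr is an assumed placeholder threshold, not a calibrated value.
    """
    return pearson(mouth_opening, audio_level) < min_corr
```

A low correlation is only a prompt for closer inspection: silence, off-screen speakers, and compression can all weaken the signal in genuine footage.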

Visual Detection Indicators

Facial Inconsistencies include unnatural blinking patterns or lack of blinking, asymmetrical facial features or expressions, inconsistent skin texture or coloration, and unusual shadows or lighting on the face.
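
The blinking indicator can be turned into a rough automated check. The sketch below assumes an upstream face-tracking step has already produced one "eye openness" value per frame (lower means more closed). The function names, the `closed_below` cut-off, and the `min_per_minute` threshold are hypothetical placeholders that would need tuning against real footage.

```python
def count_blinks(eye_openness, closed_below=0.2):
    """Count blinks as transitions from open to closed.

    Consecutive closed frames are treated as a single blink.
    """
    blinks = 0
    closed = False
    for value in eye_openness:
        if value < closed_below:
            if not closed:
                blinks += 1
            closed = True
        else:
            closed = False
    return blinks


def blink_rate_suspicious(eye_openness, fps, min_per_minute=5.0,
                          closed_below=0.2):
    """Flag a clip whose blink rate falls below an assumed minimum."""
    if fps <= 0 or not eye_openness:
        raise ValueError("need a positive fps and at least one frame")
    minutes = len(eye_openness) / fps / 60.0
    rate = count_blinks(eye_openness, closed_below) / minutes
    return rate < min_per_minute
```

Short clips give unreliable rates, so a check like this is better suited to footage lasting at least several seconds, and it should be treated as one signal among several.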

Technical Artifacts appear as blurring around facial edges or hairlines, inconsistent video quality between face and background, flickering or warping effects during movement, and misaligned facial features during speech.
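
Flickering and warping tend to show up as abrupt frame-to-frame changes, which suggests a simple temporal consistency check. The sketch below assumes frames are already decoded into small grayscale grids (lists of rows of numbers). The function names and the `ratio` threshold are hypothetical, and real video analysis tools use far richer features than raw pixel differences.

```python
from statistics import median


def frame_difference(frame_a, frame_b):
    """Mean absolute pixel difference between two same-sized grayscale frames."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(frame_a, frame_b):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += abs(pixel_a - pixel_b)
            count += 1
    if count == 0:
        raise ValueError("frames must not be empty")
    return total / count


def flag_flicker(frames, ratio=3.0):
    """Return indices i where the change from frame i-1 to frame i is
    more than `ratio` times the median change across the clip."""
    diffs = [frame_difference(a, b) for a, b in zip(frames, frames[1:])]
    if not diffs:
        return []
    baseline = median(diffs)
    return [i + 1 for i, d in enumerate(diffs) if d > ratio * baseline]
```

Legitimate scene cuts and camera motion also produce large differences, so in practice a check like this would be restricted to the face region and combined with other indicators.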

Behavioral and Contextual Clues

Speech and Movement Patterns that suggest manipulation include uncharacteristic vocabulary or speaking style, robotic or unnatural gestures, an inconsistent accent or pronunciation, and unusual pauses or speech rhythm.

Contextual Verification involves cross-referencing with official social media accounts, verifying timing and location claims, checking for corroborating evidence from multiple sources, and confirming through direct communication when possible.

Technical Detection Tools

Consumer-Level Solutions include browser extensions for social media deepfake detection, mobile apps that analyze video authenticity, online platforms offering free deepfake analysis, and reverse image search for face verification.

Professional Detection Platforms provide enterprise-grade AI detection systems, forensic video analysis software, blockchain-based content verification, and multi-modal analysis combining audio and visual detection.
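
Multi-modal analysis ultimately has to combine several detector outputs into one decision. A minimal sketch of that idea follows, assuming each detector reports a suspicion score between 0 and 1. The detector names, weights, thresholds, and the functions `fuse_scores` and `triage` are hypothetical and do not describe any particular vendor's platform.

```python
def fuse_scores(scores, weights):
    """Weighted average of the detector scores that are available.

    Detectors missing from `scores` are skipped, and the remaining
    weights are renormalised so the result stays in [0, 1].
    """
    used = [name for name in scores if name in weights]
    total_weight = sum(weights[name] for name in used)
    if total_weight <= 0:
        raise ValueError("no weighted detector scores available")
    return sum(scores[name] * weights[name] for name in used) / total_weight


def triage(score, review_at=0.4, block_at=0.7):
    """Map a fused score to an action using assumed thresholds."""
    if score >= block_at:
        return "block"
    if score >= review_at:
        return "manual_review"
    return "pass"


if __name__ == "__main__":
    weights = {"visual": 0.5, "audio": 0.3, "metadata": 0.2}
    scores = {"visual": 0.9, "audio": 0.5, "metadata": 0.1}
    print(triage(fuse_scores(scores, weights)))
```

Keeping a middle "manual review" band reflects the layered approach described here: automated scores narrow down what a human needs to look at rather than replacing that judgment.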

Final Thoughts

Video deepfake attacks represent a rapidly evolving threat that combines sophisticated AI technology with traditional social engineering tactics. The key to protection lies in understanding how these attacks work, recognizing common attack patterns, and implementing layered detection strategies that combine human awareness with technical solutions.

The most effective defense involves staying informed about emerging deepfake techniques, developing critical evaluation skills for digital content, and maintaining healthy skepticism about unexpected or suspicious video communications. Organizations should establish verification protocols for high-stakes decisions and train employees to recognize potential deepfake attacks.

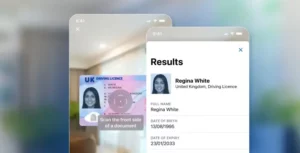

Enterprise-level identity verification platforms show how these detection techniques are applied in practice. Solutions such as those developed by Microblink incorporate presentation attack detection and fraud prevention capabilities, and the company's 12 years of computer vision R&D illustrate how specialized fraud detection technology addresses these challenges through layered verification that combines multiple detection approaches.

As deepfake technology continues to advance, the importance of robust detection capabilities and user education will only increase, making awareness and preparation essential for individuals and organizations alike.