Agentic AI Identity Solution

As AI agents and automated actors increasingly operate on behalf of users, traditional point-in-time KYC is no longer sufficient to secure the digital journey. Our Agentic AI Identity solution introduces a ‘Know Your Actor’ framework that continuously distinguishes between human users, legitimate delegated agents, and malicious synthetic threats. By integrating real-time classification with robust policy orchestration, we empower your organization to embrace the agentic future without compromising on fraud prevention or regulatory compliance.

Architecting Trust in the Age of AI Agents

Future-proof your digital security by accurately distinguishing between human users, AI agents, and automated actors. Our Agentic AI Identity solution empowers you to prevent emerging AI-assisted fraud while securely enabling legitimate agent interactions across all customer-facing applications.

Move beyond traditional KYC with our ‘Know Your Actor’ framework, providing continuous identity verification throughout the user journey. This ensures robust governance, compliance, and auditability for every interaction, whether human-initiated or AI-delegated.

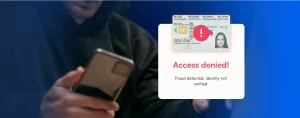

Fortify Against Emerging AI-Powered Fraud

Microblink’s liveness detection and document authentication are built to detect and stop sophisticated deepfakes and synthetic identities, which are increasingly generated with AI.

This helps keep your customer-facing applications secure against evolving AI-assisted fraud vectors and protects your brand’s integrity.

Architect a Future-Ready ‘Know Your Actor’ Framework

Our solution provides the foundational intelligence to precisely differentiate between human users, legitimate AI agents, and malicious automated actors within your customer journeys.

This empowers you to implement dynamic trust policies and maintain compliance in an increasingly agentic digital landscape.

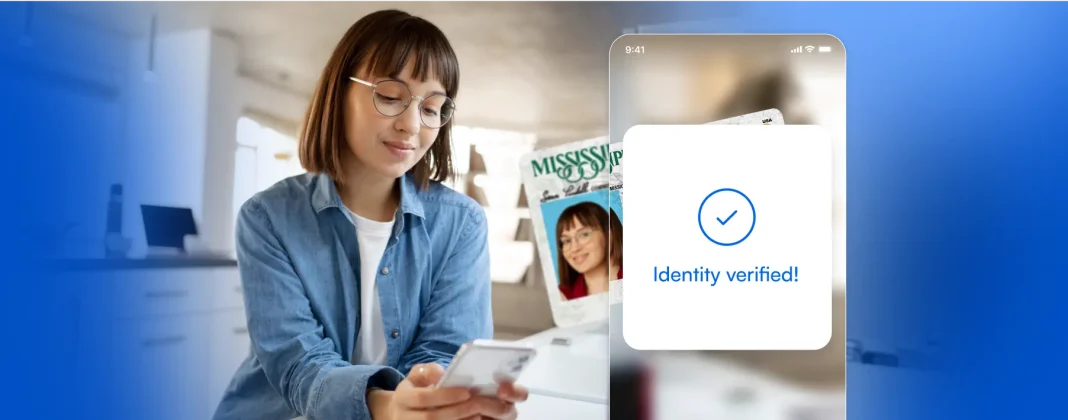

Seamless Integration for Continuous Identity Assurance

Microblink’s robust APIs and SDKs integrate effortlessly into your existing identity infrastructure, enabling continuous verification beyond initial onboarding.

This allows you to build a resilient identity architecture that adapts to the complexities of AI agent interactions and evolving regulatory demands.

Our global customers verify people in 140+ countries

Companies choose Microblink for

Customer Onboarding

Quick and accurate ID verification, ensuring a seamless and secure registration process

KYC/AML Compliance

Meet regulatory requirements with ID document verification and non-documentary signals

Chargeback and Card-Not-Present Fraud

Verify identity and prevent unauthorized transactions through secure document scanning

Stolen Identity/Synthetic Identity Fraud

Detect stolen or synthetic identities with precision and verify IDs to prevent fraudulent account creation and transactions

Age Verification

Ensure compliance and prevent underage access by instantly verifying customer ages through secure ID scanning

The Original Expertise In AI For Identity

Rooted in 12 years of computer vision R&D

Microblink has spent 12 years in computer vision R&D at the forefront of AI-driven identity verification, continuously innovating to deliver fast and accurate solutions.

Pioneering the use of AI in identity verification

We pioneered AI-driven identity verification, setting the standard for fast, secure, and accurate ID scanning solutions.

In-house machine learning lab

We develop our AI in-house, using proprietary data and a dedicated team of machine learning specialists to ensure unmatched accuracy and performance in identity verification.

Learn More About Our Solutions

FAQs

Why are our existing KYC and identity verification controls no longer enough once AI agents start interacting with customer journeys?

Because traditional KYC/IDV answers only one question well: was this human identity verified at onboarding? It does not reliably answer who or what is acting now, whether that actor is still under the customer’s control, or whether the current behavior matches legitimate use. That gap becomes dangerous when AI agents, scripted automation, and human-assisted fraud can all operate behind a previously verified account. For a VP of Product Security or Head of Identity, the real issue is not replacing KYC, but extending it into a continuous ‘Know Your Actor’ model. You need controls that classify actor type in real time, detect when behavior shifts from human-led to automated, distinguish delegated automation from hostile bot activity, and apply different policies at login, account changes, payments, withdrawals, and recovery. The pain point is that fraud now happens after onboarding as well as during it.

How can we tell the difference between a legitimate human user, an approved AI agent, and malicious automation without destroying conversion?

You will not solve this with a single signal. The practical approach is layered classification. Start with identity proofing at onboarding, then add session-level signals such as device intelligence, browser and emulator indicators, velocity, navigation patterns, interaction entropy, behavioral biometrics, API usage characteristics, and transaction context. For approved AI-agent use cases, require explicit delegation artifacts such as tokenized authorization, scoped permissions, expiry windows, and action-level constraints. For suspicious automation, look for patterns humans do not sustain well at scale: highly consistent timing, abnormal navigation paths, distributed device reuse, replay behavior, low-friction interaction sequences, or mismatches between claimed identity and observed behavior. The goal is not to challenge everyone equally. It is to risk-tier the journey so low-risk humans pass with minimal friction, known delegated agents operate within policy, and unknown or evasive actors are stepped up, rate-limited, or blocked.
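
The risk-tiering idea above can be sketched in a few lines of Python. This is a minimal illustration, not a Microblink API: the signal names, weights, and thresholds are all hypothetical, and a production system would tune them on labeled traffic and use far more signals.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    ALLOW = "allow"
    STEP_UP = "step_up"
    BLOCK = "block"


@dataclass
class SessionSignals:
    # Hypothetical signals, each normalized to 0.0 (benign) .. 1.0 (suspicious).
    device_risk: float
    timing_regularity: float   # highly consistent timing suggests automation
    velocity: float
    navigation_anomaly: float
    has_valid_delegation: bool = False  # scoped, unexpired delegation artifact present


# Illustrative weights and thresholds (assumptions, not recommended values).
WEIGHTS = {
    "device_risk": 0.3,
    "timing_regularity": 0.3,
    "velocity": 0.2,
    "navigation_anomaly": 0.2,
}
STEP_UP_AT = 0.4
BLOCK_AT = 0.75


def risk_score(s: SessionSignals) -> float:
    """Weighted sum of the session signals."""
    return sum(w * getattr(s, name) for name, w in WEIGHTS.items())


def tier(s: SessionSignals) -> Tier:
    score = risk_score(s)
    # An approved agent is expected to look automated, so its timing
    # regularity is not counted against it.
    if s.has_valid_delegation:
        score -= WEIGHTS["timing_regularity"] * s.timing_regularity
    if score >= BLOCK_AT:
        return Tier.BLOCK
    if score >= STEP_UP_AT:
        return Tier.STEP_UP
    return Tier.ALLOW
```

With these assumed weights, a session with machine-like timing but otherwise low risk is allowed when it carries a valid delegation artifact and is stepped up when it does not.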

What does a practical ‘Know Your Actor’ architecture look like in a real customer-facing application?

A workable architecture has five layers. First, identity proofing: document verification, biometrics, database checks, sanctions screening, and any required KYC/KYB controls. Second, actor detection: determine whether the current session appears human-led, bot-driven, agent-assisted, or hybrid. Third, behavioral and contextual risk: evaluate device trust, network reputation, geovelocity, behavioral anomalies, session integrity, transaction intent, and historical account patterns. Fourth, policy orchestration: apply rules and models that decide when to allow, step up, throttle, require re-consent, or escalate to manual review. Fifth, auditability: log what signals were used, how the decision was made, what permissions existed, and why an action was approved or denied. This matters because the problem is rarely missing one control. It is the lack of orchestration between controls. If your teams cannot explain actor classification, delegated authority, and step-up logic at each stage of the journey, then your architecture is still optimized for a human-only world.
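
As a rough sketch of how the fourth and fifth layers might sit on top of the first three, the Python below takes the outputs of identity proofing, actor detection, and risk scoring as inputs, applies a simplified policy, and records why each decision was made. The field names, action names, and thresholds are assumptions for illustration only, and the decision set is reduced to allow, step up, manual review, and deny.

```python
from dataclasses import dataclass, field


@dataclass
class Context:
    identity_verified: bool   # layer 1 output: identity proofing
    actor_type: str           # layer 2 output: "human", "agent", "bot", or "unknown"
    risk: float               # layer 3 output: 0.0 (low) .. 1.0 (high)
    action: str               # requested action, e.g. "view_balance"
    audit: list = field(default_factory=list)  # layer 5: decision records


HIGH_RISK_ACTIONS = {"withdraw", "change_payout"}  # illustrative set


def decide(ctx: Context) -> str:
    """Layer 4: policy orchestration over the outputs of layers 1 to 3."""
    if not ctx.identity_verified:
        decision, reason = "deny", "identity not proofed"
    elif ctx.actor_type == "bot":
        decision, reason = "deny", "hostile automation"
    elif ctx.actor_type == "unknown" or ctx.risk >= 0.7:
        decision, reason = "manual_review", "unclear actor or high risk"
    elif ctx.action in HIGH_RISK_ACTIONS and (ctx.actor_type == "agent" or ctx.risk >= 0.3):
        decision, reason = "step_up", "sensitive action"
    else:
        decision, reason = "allow", "within policy"
    # Layer 5: log the signals used and the reason for the outcome.
    ctx.audit.append({
        "action": ctx.action,
        "actor_type": ctx.actor_type,
        "risk": ctx.risk,
        "decision": decision,
        "reason": reason,
    })
    return decision
```

Keeping the policy in one function that also writes the audit record is one way to make sure every decision can be explained later; real deployments would typically externalize the rules and version them.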

How do we allow legitimate AI-agent activity for customers without opening the door to account takeover, unauthorized delegation, or invisible abuse?

Treat delegation as a first-class identity and authorization problem, not a feature add-on. If an AI agent can act for a user, you need to know who authorized it, what it is allowed to do, under what conditions, for how long, and how that authority can be revoked. In practice, that means explicit user consent, scoped permissions, transaction and action limits, strong re-authentication for sensitive delegation events, per-agent credentials or attestations, and clear audit trails linking every agent action back to a verified user and authorization record. You should also separate read access from write access and low-risk actions from high-risk ones. A customer may reasonably allow an agent to gather account data, but not to change payout details or initiate a withdrawal without step-up verification. The pain point here is governance: most teams can imagine useful agent experiences, but they have no durable control model for proving those actions were authorized.
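
One way to picture such a control model is a delegation grant that is checked on every agent action. The sketch below is hypothetical throughout: the `DelegationGrant` fields, scope strings, and action names are invented for illustration, and it omits transaction limits and cryptographic attestation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class DelegationGrant:
    user_id: str          # verified user who authorized the agent
    agent_id: str         # per-agent identifier
    scopes: frozenset     # e.g. {"accounts:read"}
    expires_at: datetime  # expiry window
    revoked: bool = False


# Hypothetical policy: action -> (required scope, always requires step-up).
ACTION_POLICY = {
    "read_balance": ("accounts:read", False),
    "change_payout": ("payouts:write", True),
    "withdraw": ("funds:write", True),
}


def authorize(grant: DelegationGrant, action: str, now: datetime) -> str:
    """Return "allow", "step_up", or "deny" for an agent-initiated action."""
    if grant.revoked or now >= grant.expires_at:
        return "deny"
    policy = ACTION_POLICY.get(action)
    if policy is None:
        return "deny"  # unknown actions are denied by default
    scope, needs_step_up = policy
    if scope not in grant.scopes:
        return "deny"
    return "step_up" if needs_step_up else "allow"


# Usage: a read-only grant lets the agent gather data but not move funds.
now = datetime(2025, 1, 1, tzinfo=timezone.utc)
grant = DelegationGrant("user-1", "agent-1", frozenset({"accounts:read"}),
                        expires_at=now + timedelta(hours=1))
print(authorize(grant, "read_balance", now))  # allow
print(authorize(grant, "withdraw", now))      # deny
```

Separating read scopes from write scopes, and forcing step-up on write actions even when the scope is present, mirrors the distinction drawn above between low-risk and high-risk delegated actions.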

How should we adapt compliance and audit controls when non-human actors are involved in onboarding, account servicing, or transactions?

Regulators still expect accountability, even if the initiating action comes from software. Your control framework should preserve the core compliance outcomes: verified identity, risk-based decisioning, traceability, explainability, and defensible escalation. That means documenting not only who the customer is, but also who or what acted on their behalf, what authority existed, which signals influenced the decision, and whether a human review was triggered. For AML, fraud, and privacy teams, the concern is not whether AI agents are allowed in theory; it is whether you can defend decisions during an audit, dispute, SAR review, or customer complaint. Build for evidence retention, decision logs, consent records, policy versioning, and action-level histories. If an agent changes account information, moves funds, or submits onboarding data, you should be able to reconstruct the event chain without ambiguity. Compliance breaks when identity, authority, and action history are disconnected.
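
To make "reconstruct the event chain without ambiguity" concrete, the sketch below keeps an append-only decision log in which each entry is hashed together with the previous entry's hash. Hash chaining is our illustrative choice for tamper evidence, not a stated regulatory requirement, and the event fields shown are hypothetical.

```python
import hashlib
import json


def _digest(prev_hash: str, event: dict) -> str:
    # Serialize with sorted keys so the same event always hashes the same way.
    body = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_event(log: list, event: dict) -> dict:
    """Append an event, linking it to the previous entry by hash."""
    prev_hash = log[-1]["hash"] if log else ""
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(prev_hash, event)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = ""
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


# Usage: record who acted, under what authority, and which policy version decided.
log = []
append_event(log, {"user_id": "user-1", "actor": "agent-1", "grant_id": "grant-42",
                   "action": "change_payout", "policy_version": "v3",
                   "decision": "step_up"})
append_event(log, {"user_id": "user-1", "actor": "human", "grant_id": None,
                   "action": "approve_step_up", "policy_version": "v3",
                   "decision": "allow"})
print(verify_chain(log))
```

Each record ties identity, authority, and action together in one place, which is the connection the paragraph above says compliance depends on.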

What should trigger step-up verification when an AI-assisted or automated actor is present?

Step-up should be driven by a combination of actor uncertainty, action sensitivity, and anomaly severity. Trigger it when the actor classification is unclear, when behavior suddenly shifts from normal human usage to automation-like patterns, when a trusted account attempts a high-risk action from a new environment, or when a delegated agent exceeds its normal scope or velocity. Sensitive moments usually include account recovery, password or MFA resets, payout changes, beneficiary additions, large transfers, crypto withdrawals, tax or profile changes, and anything that could irreversibly alter ownership or funds flow. The mistake many teams make is treating authentication as binary: logged in or not. In agentic environments, step-up should be contextual and progressive. You may require passive checks first, then biometric re-verification, then explicit user approval, then human review if the risk remains unresolved. The right model protects critical actions without forcing every customer through the same heavy flow.
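
A contextual, progressive step-up could be sketched as follows. The scoring formula, weights, and threshold are assumptions made for illustration, and each check is a placeholder callable that returns True when it resolves the risk.

```python
from typing import Callable, Dict

# Escalation order taken from the text above.
LADDER = [
    "passive_checks",
    "biometric_reverification",
    "explicit_user_approval",
    "human_review",
]


def needs_step_up(actor_uncertainty: float, action_sensitivity: float,
                  anomaly_severity: float, threshold: float = 0.5) -> bool:
    """Combine the three drivers (each 0.0 .. 1.0) into one trigger decision."""
    score = (0.4 * action_sensitivity
             + 0.3 * actor_uncertainty
             + 0.3 * anomaly_severity)
    return score >= threshold


def run_ladder(checks: Dict[str, Callable[[], bool]]) -> str:
    """Run steps in order and stop at the first one that resolves the risk.

    Returns the name of the resolving step, or "denied" if none resolves it.
    Steps without a configured check are skipped.
    """
    for step in LADDER:
        check = checks.get(step)
        if check is not None and check():
            return step
    return "denied"
```

Because the ladder stops at the first step that resolves the risk, most customers would never reach the heavier checks, which is the point of making step-up progressive rather than binary.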