Stop Synthetic Media Attacks That Bypass Traditional Identity Verification

Synthetic media attacks are no longer a theoretical risk or a future problem. They are actively being used to bypass identity verification systems, impersonate real individuals, and gain access to financial accounts, services, and sensitive data. As generative AI tools become cheaper and more accessible, the quality of fake images, videos, and audio has reached a point where traditional verification methods struggle to tell real from artificial.

For risk management specialists, this shift introduces a new kind of uncertainty. Many existing identity controls were built to detect stolen credentials or simple document fraud, not AI-generated faces or manipulated video streams. Understanding how synthetic media attacks work, and how to defend against them, is now a core requirement for modern fraud prevention strategies.

What Are Synthetic Media Attacks?

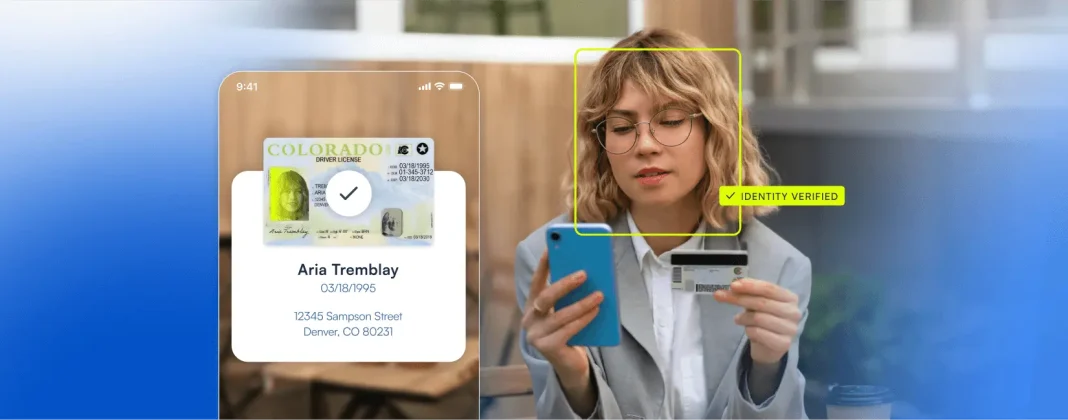

Synthetic media attacks use artificially generated or manipulated media to deceive people or automated systems. This includes deepfake videos, AI-generated selfies, cloned voices, and manipulated images designed to impersonate real individuals during identity verification or authentication.

In fraud scenarios, these attacks are often used during customer onboarding, account recovery, or step-up verification. A convincing synthetic face or video can pass basic biometric checks, allowing fraudsters to create synthetic identities or take over legitimate accounts. As the technology improves, these attacks are becoming harder to detect using legacy tools.

How Synthetic Media Attacks Bypass Traditional Identity Verification

Traditional identity verification systems were designed for a different era. Many rely on static checks, basic facial matching, or human review of images and videos. Synthetic media exploits these weaknesses by presenting media that looks authentic at a surface level but is entirely artificial underneath.

For example, a fraudster can generate a realistic selfie that matches a stolen ID photo closely enough to pass basic face matching. Other attackers replay pre-recorded or manipulated videos to defeat liveness checks that only look for simple movements or gestures. Without deeper analysis, these systems treat synthetic inputs as genuine.
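To make the face-matching weakness concrete, here is a minimal sketch of a similarity-only check. Everything in it is an assumption for illustration: the four-dimensional "embeddings" are toy numbers rather than the output of any real face model, and `naive_face_match` and `MATCH_THRESHOLD` are hypothetical names, not part of any vendor's API.

```python
import math

# Illustrative threshold; real systems tune this against measured error rates.
MATCH_THRESHOLD = 0.80


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def naive_face_match(id_embedding, selfie_embedding, threshold=MATCH_THRESHOLD):
    """Similarity-only check: it never asks where the selfie came from."""
    return cosine_similarity(id_embedding, selfie_embedding) >= threshold


# Toy embeddings (hypothetical values, not produced by a real model).
id_photo = [0.12, 0.80, 0.35, 0.45]
synthetic_selfie = [0.10, 0.82, 0.33, 0.47]   # generated to resemble the ID photo
unrelated_person = [0.90, 0.05, 0.10, 0.40]

print(naive_face_match(id_photo, synthetic_selfie))   # passes the check
print(naive_face_match(id_photo, unrelated_person))   # fails the check
```

The check correctly rejects an unrelated face, but it accepts the generated selfie because the only question it asks is "are these two faces similar?". Nothing in the function examines whether the image was captured from a live person.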

This is why synthetic media attacks are so dangerous. They do not break the rules. They exploit assumptions baked into older verification models.

Common Types of Synthetic Media Attacks Used in Fraud

The most common synthetic media attacks involve deepfake imagery and video used during onboarding or re-verification. Fraudsters generate faces that do not belong to real people or alter existing faces to match stolen identity data.

Audio deepfakes are also gaining traction, particularly in social engineering and account recovery scenarios. Cloned voices can be used to impersonate customers or executives, convincing support teams to override controls.

More advanced attacks combine multiple media types. A synthetic identity may include a forged document, an AI-generated selfie, and manipulated behavioral signals, all designed to reinforce the illusion of legitimacy. These blended attacks are especially difficult to detect without layered defenses.

The Business and Compliance Risks of Synthetic Media Attacks

The impact of synthetic media attacks goes beyond individual fraud losses. Successfully onboarded synthetic identities can be used for money laundering, payment fraud, or large-scale abuse that triggers regulatory scrutiny.

From a compliance perspective, failing to detect these attacks increases the risk of KYC and AML violations. Regulators increasingly expect organizations to understand emerging threats and adapt their controls accordingly. Relying on outdated verification methods can be interpreted as a failure to maintain effective safeguards.

Reputational damage is another major concern. Customers lose trust quickly when they learn that sophisticated fraud has slipped through systems meant to protect them. Recovering that trust is often more costly than the fraud itself.

Why Detection Requires a Layered Defense Strategy

There is no single signal that reliably detects synthetic media attacks. Effective defense requires layered controls that analyze biometric data, media authenticity, and contextual risk signals together.
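A layered decision could be sketched roughly as follows. This is an illustration under stated assumptions, not a description of how any particular product scores sessions: the signal names, the 0 to 1 score ranges, and every threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class VerificationSignals:
    """Hypothetical per-session scores, each in the range 0..1."""
    face_match: float          # higher = closer match to the ID photo
    liveness: float            # higher = more likely a live, present person
    media_authenticity: float  # higher = fewer signs of generation or tampering
    context_risk: float        # higher = riskier device, network, or behavior


def decide(signals: VerificationSignals) -> str:
    """Combine the layers so that no single strong score can carry the session."""
    # Hard floors: a clear failure in any biometric or media layer rejects outright.
    if signals.liveness < 0.5 or signals.media_authenticity < 0.5:
        return "reject"
    if signals.face_match < 0.8:
        return "reject"
    # Borderline media signals or risky context go to manual review.
    weakest_media_signal = min(signals.liveness, signals.media_authenticity)
    if weakest_media_signal < 0.8 or signals.context_risk > 0.6:
        return "review"
    return "approve"


# A near-perfect face match does not help if the media itself looks generated.
print(decide(VerificationSignals(0.99, 0.95, 0.20, 0.10)))  # reject
print(decide(VerificationSignals(0.95, 0.90, 0.90, 0.70)))  # review
print(decide(VerificationSignals(0.95, 0.90, 0.90, 0.10)))  # approve
```

The design choice worth noting is that the layers act as independent gates rather than being averaged into one number, so a high face-match score cannot offset a low authenticity score.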

Advanced face biometric analysis plays a central role. Systems must be able to detect subtle artifacts introduced by AI generation, not just match facial features. Liveness detection must go beyond basic motion checks to confirm that a real, present human is interacting with the system.
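One common way to move beyond a fixed motion check is a randomized, time-limited challenge, so that a pre-recorded clip is unlikely to contain the requested sequence. The sketch below assumes a hypothetical gesture vocabulary and hypothetical function names; it illustrates the general idea only and is not any vendor's liveness method.

```python
import secrets
import time

# Hypothetical gesture vocabulary for an active liveness prompt.
GESTURES = ["turn_left", "turn_right", "look_up", "blink", "smile"]


def issue_challenge(length=3, ttl_seconds=15.0, now=None):
    """Create an unpredictable gesture sequence that expires quickly."""
    now = time.time() if now is None else now
    return {
        "nonce": secrets.token_hex(8),  # ties the response to this session
        "gestures": [secrets.choice(GESTURES) for _ in range(length)],
        "expires_at": now + ttl_seconds,
    }


def verify_response(challenge, nonce, observed_gestures, now=None):
    """Accept only the exact sequence, for this nonce, inside the time window."""
    now = time.time() if now is None else now
    if now > challenge["expires_at"]:
        return False  # too slow: could be an offline-rendered reply
    if not secrets.compare_digest(nonce, challenge["nonce"]):
        return False  # response belongs to a different session
    return list(observed_gestures) == challenge["gestures"]


challenge = issue_challenge(now=1000.0)
print(verify_response(challenge, challenge["nonce"], challenge["gestures"], now=1005.0))
print(verify_response(challenge, challenge["nonce"], challenge["gestures"], now=1020.0))
```

A challenge like this raises the bar for replayed video, but on its own it does not address media generated in real time, which is why it is paired with the artifact analysis described above.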

Microblink’s identity verification platform addresses this challenge with advanced face biometric checks, including iBeta Level 2 Presentation Attack Detection and robust liveness detection. These capabilities are specifically designed to identify spoofing attempts, deepfakes, and other synthetic media attacks that evade traditional controls.

Evaluating Solutions Designed to Stop Synthetic Media Attacks

Not all identity verification solutions are built to handle synthetic media threats. Risk leaders should evaluate whether vendors have explicitly designed their biometric systems to detect AI-generated attacks, not just legacy spoofing techniques.

The table below highlights key criteria to assess when evaluating defenses against synthetic media attacks.

| Evaluation Criteria | What to Look For | Why It Matters |
|---|---|---|
| Biometric Depth | Advanced face analysis beyond simple matching | Detects AI-generated and manipulated faces |
| Liveness Detection | Active and passive liveness with spoof resistance | Prevents replay and deepfake attacks |
| Presentation Attack Detection | Certified PAD testing such as iBeta Level 2 | Validates resistance to advanced attacks |
| Signal Layering | Biometrics combined with document and risk signals | Reduces reliance on any single control |
| Adaptability | Models updated to reflect emerging attack methods | Keeps defenses current as AI evolves |
| Compliance Alignment | Audit-ready outputs and consistent decisioning | Supports regulatory expectations |

Protecting Your Organization and Your Customers

Synthetic media attacks are not a passing fraud trend. They are a direct consequence of rapid advances in generative AI, and they will continue to evolve. Organizations that rely on static or surface-level verification will find themselves increasingly exposed.

Protecting against these attacks requires acknowledging that identity verification is now an adversarial problem. Fraudsters actively test systems and adapt when they find weaknesses. Defense must be just as adaptive, combining advanced biometrics, liveness detection, and continuous improvement.

Microblink’s platform was built with this reality in mind. By delivering advanced biometric defenses as part of a broader identity verification strategy, it helps organizations stay ahead of synthetic media attacks while maintaining strong compliance and a smooth customer experience. If you’d like to learn more, get in touch today.