Making AI Trustworthy: How to Build Systems People Can Rely On

A conversation with Joshua Weisberg, CEO of Lambda Finance

Today, we’re speaking with Joshua Weisberg, CEO of Lambda Finance, the top-rated investment research platform trusted by active traders and investors navigating complex, fast-moving markets. This conversation is part of Microblink’s ongoing exploration of how trusted AI systems are built and operated in high-stakes environments, where accuracy, explainability, and reliability are non-negotiable.

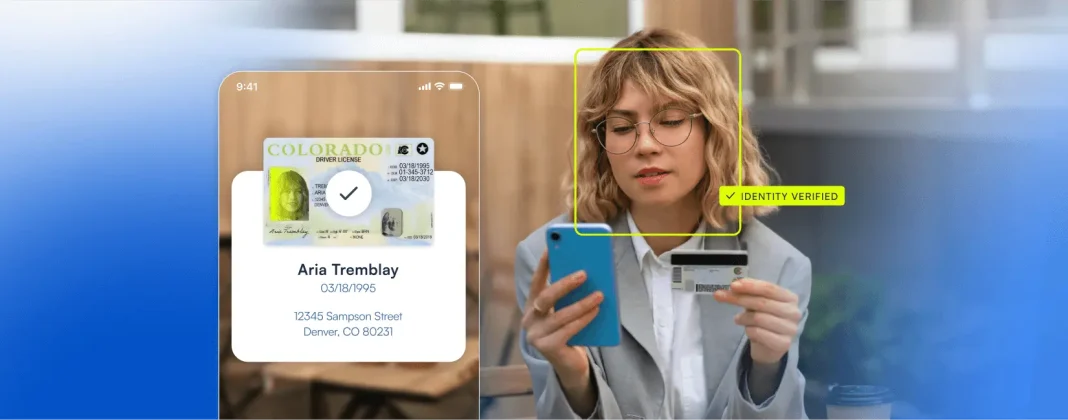

As a global leader in identity intelligence, Microblink works with regulated organizations across finance, mobility, and digital platforms to automate critical decisions without sacrificing trust. As AI becomes central to high-impact workflows, a shared challenge emerges across industries: how do you design systems people can rely on when the margin for error is razor-thin?

With years of experience developing AI-driven research tools used daily in high-risk financial environments, Joshua brings a practitioner’s perspective on trust, accuracy, and scalability. His insights extend well beyond finance, offering practical lessons for any organization building AI systems that must perform consistently, transparently, and at scale.

---

Microblink: In high-stakes environments, whether verifying identities or making investment decisions, trust isn’t optional. What does it actually take to build AI systems that users trust when the cost of being wrong is high?

Joshua Weisberg: Trust starts with consistency and explainability. Users don’t expect perfection, but they do expect dependability. In finance, a single misleading signal can trigger real monetary consequences, just as a false negative or false positive creates risk in identity verification.

At Lambda Finance, we’ve learned that trust is earned when users understand why a system surfaces a particular insight and when results behave predictably across scenarios. That means grounding AI outputs in verified data, validating them continuously, and prioritizing signal quality over volume. Confidence comes from knowing the system has been stress-tested in real-world conditions—not just ideal ones.

---

Microblink: Both fraud prevention and market analysis face the same challenge: separating meaningful insights from overwhelming noise. How do you approach data filtering and prioritization when designing AI systems that operate in real time?

Joshua Weisberg: Noise is inevitable in complex systems. The mistake many platforms make is assuming more data automatically leads to better outcomes. In reality, high-volume data can actually degrade decision-making if it’s not properly filtered and contextualized.

At Lambda Finance, we focus on identifying which signals are actionable versus merely interesting. That means combining multiple data sources, cross-validating them, and using AI to highlight relationships, not just raw metrics. The goal isn’t to overwhelm users with information, but to reduce cognitive load so they can focus on what actually matters in the moment. In high-stakes environments, clarity beats volume every time.

---

Microblink: Many AI tools deliver outputs, but not necessarily confidence. What separates systems that simply surface information from those that meaningfully support human decision-making?

Joshua Weisberg: The difference is context. Systems that only surface information force users to do the hard work of interpretation themselves. Decision-oriented systems help users understand why something matters and how it fits into the bigger picture.

That’s where integrated platforms have a major advantage. When data, analysis, and interpretation exist in the same environment, AI can connect dots in ways that feel intuitive rather than opaque. Confidence comes when users feel the system is working with them, guiding attention, reducing uncertainty, and supporting judgment rather than replacing it. The best AI systems amplify human decision-making instead of obscuring it.

---

Microblink: Many organizations rely on disconnected tools to solve complex problems. From your experience, what breaks first when systems aren’t designed to work together, and what does a truly unified platform enable?

Joshua Weisberg: Fragmentation breaks trust faster than almost anything else. When users have to jump between tools, reconcile conflicting data, or manually stitch insights together, confidence erodes quickly. You start questioning which source is correct, or whether any of them are.

Unified platforms solve this by creating a single source of truth. When tools are built to work together natively, AI can reason across datasets instead of operating in silos. That doesn’t just improve accuracy; it dramatically improves speed and usability. In high-stakes environments, that cohesion is what allows users to act decisively instead of hesitating. Integration isn’t a luxury; it’s a requirement for operational certainty.

---

Microblink: Looking ahead, how do you see AI-driven decision systems evolving in industries where accuracy, speed, and trust all matter simultaneously? What will separate the platforms that endure from those that don’t?

Joshua Weisberg: The platforms that endure will be the ones designed for reality, not demos. That means handling edge cases, adversarial behavior, and imperfect data without breaking down. It also means being transparent about limitations and continuously improving based on real usage.

AI systems that earn long-term trust won’t just be faster or more powerful; they’ll be more reliable. They’ll help users understand risk, not obscure it. Whether in finance, identity verification, or fraud prevention, the winners will be platforms that deliver clarity under pressure and confidence at scale. Trust isn’t built on promises; it’s built on consistent performance when it matters most.