Fraud Forum Recap: Lessons from a Candid Industry Conversation on Identity and AI

This week, Microblink hosted a group of fraud, risk, and identity leaders for something intentionally different: an honest, closed-door conversation about where fraud is headed and what the industry must do next.

The Fraud Forum was designed to be conversational rather than performative, with space for open dialogue across disciplines and industries. The agenda moved from big-picture framing to deeply technical discussions, blending keynote perspectives, trend analysis, roundtable debates, and live demonstrations. Here are some of the big takeaways from the event.

Fraud Is Scaling Faster Than Defenses

A central theme emerged early: fraud has entered an era of industrial scale. Attacks that once required specialized skills, tooling, and coordination are now accessible to almost anyone, powered by generative AI, automation, and global criminal infrastructure.

One area of focus was the rapid rise of highly sophisticated investment and relationship scams. These operations are no longer opportunistic or amateur. They are patient, psychologically precise, and devastatingly effective. Victims are not defined by age or technical literacy. They include highly educated professionals who lose life savings after months of carefully orchestrated manipulation.

What makes these scams especially dangerous is their veneer of legitimacy. Victims are often guided through real platforms, real exchanges, and real financial workflows before being diverted into entirely fabricated environments designed to extract maximum value. By the time the fraud is discovered, the damage is total.

Behind these scams is a transnational ecosystem that blends organized crime, human trafficking, and platform abuse. Entire compounds exist solely to run fraud at scale, staffed by coerced workers and fueled by advertising and communication channels that remain profitable despite widespread abuse.

The Failure Patterns Holding the Industry Back

Another discussion reframed fraud risk not just as a technology problem, but as an organizational one. Several recurring failure patterns were identified, including over-investing in disconnected tools, relying on outdated controls, operating in silos, and assuming fraud can be solved by any single layer of defense.

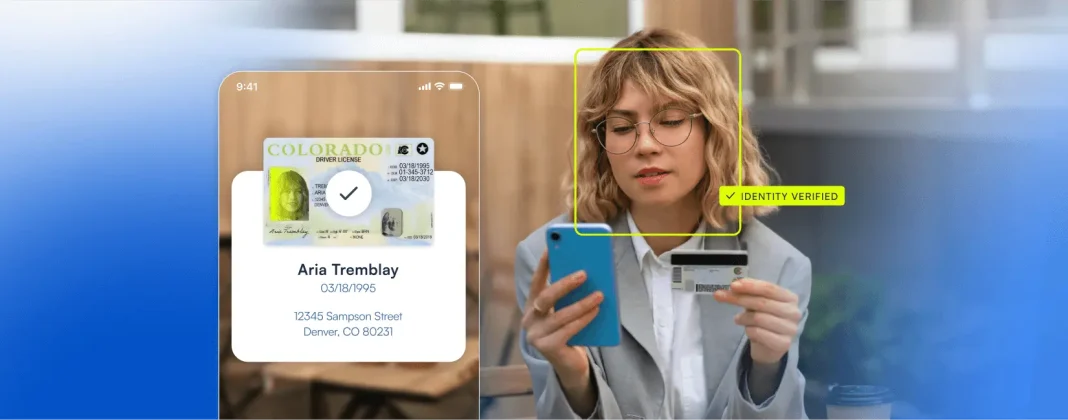

At the core of these failures is a shared misconception: that identity verification is a point-in-time event. In reality, identity is a continuous signal, one that must be assessed across documents, biometrics, devices, behavior, and transactions. Static checks cannot keep pace with adaptive, AI-driven attackers.
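To make the idea of identity as a continuous signal concrete, here is a minimal sketch in Python. It is an illustration only, not a description of any system discussed at the forum: the signal names mirror the list above, but the weights, the 0-to-1 risk scale, and the `IdentitySession` class are all hypothetical assumptions.

```python
from dataclasses import dataclass, field

# Hypothetical weights for each signal family (assumed for illustration).
SIGNAL_WEIGHTS = {
    "document": 0.25,
    "biometric": 0.25,
    "device": 0.20,
    "behavior": 0.15,
    "transaction": 0.15,
}


@dataclass
class IdentitySession:
    """Keeps the latest risk reading (0.0 = safe, 1.0 = risky) per signal."""

    readings: dict = field(default_factory=dict)

    def observe(self, signal: str, risk: float) -> None:
        """Record a new reading; later readings overwrite earlier ones."""
        if signal not in SIGNAL_WEIGHTS:
            raise ValueError(f"unknown signal: {signal}")
        if not 0.0 <= risk <= 1.0:
            raise ValueError("risk must be between 0.0 and 1.0")
        self.readings[signal] = risk

    def risk(self) -> float:
        """Weighted average of the signals observed so far."""
        if not self.readings:
            raise ValueError("no signals observed yet")
        total_weight = sum(SIGNAL_WEIGHTS[s] for s in self.readings)
        weighted = sum(SIGNAL_WEIGHTS[s] * r for s, r in self.readings.items())
        return weighted / total_weight


# Onboarding looks clean: document and selfie both pass.
session = IdentitySession()
session.observe("document", 0.1)
session.observe("biometric", 0.1)
onboarding_risk = session.risk()

# Later, the account is used from a suspicious device.
session.observe("device", 0.9)
later_risk = session.risk()
```

In this sketch the score is recomputed every time a new signal arrives, so a session that passed a point-in-time check at onboarding can still be flagged later when the device reading changes.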

Agentic AI Changes the Threat Model Entirely

As the conversation moved forward, attention shifted to what may be the next major inflection point: agentic AI.

AI agents are no longer theoretical. Systems already exist that can search, decide, transact, and optimize outcomes with minimal or no human involvement. As commerce, discovery, and payments increasingly move into agent-driven workflows, traditional assumptions about intent, accountability, and risk break down.

In an agentic world, risk begins earlier, at the moment of intent or discovery. Transactions may happen outside merchant-controlled environments. Agents may act across devices, identities, and platforms. Fraud models built around emails, IPs, or historical behavior struggle to apply when agents can be created, discarded, and reconstituted instantly.

This shift raises difficult questions. Who is liable when an agent makes a bad decision? How do you authenticate an agent rather than a human? How do merchants remain visible when discovery itself is intermediated by AI systems with opaque ranking logic?

Emerging protocols aim to address these gaps by standardizing how agents communicate with merchants, each other, and payment systems. Their adoption may determine which businesses remain discoverable and which are quietly bypassed.

Deepfakes Are Eroding Trust in Core Identity Signals

Perhaps the most sobering moments came during live demonstrations of generative AI-driven identity fraud. Fully synthetic identity documents, images, and biometric artifacts can now be produced in seconds, often tailored with specific personal data to target known verification weaknesses.

These are not crude forgeries. They replicate material properties, visual depth, and behavioral cues well enough to defeat systems that rely on isolated signals like liveness checks or document appearance alone. At scale, even a small failure rate becomes exploitable when attackers can generate thousands of attempts at near-zero cost.
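The arithmetic behind that last point can be sketched in a few lines of Python. The numbers below are illustrative assumptions, not figures from the forum, and the calculation assumes each attempt succeeds independently with the same probability, which real attacks may not satisfy.

```python
def prob_at_least_one_bypass(per_attempt_rate: float, attempts: int) -> float:
    """Chance that at least one of `attempts` independent tries gets through."""
    if not 0.0 <= per_attempt_rate <= 1.0:
        raise ValueError("per_attempt_rate must be between 0.0 and 1.0")
    if attempts < 0:
        raise ValueError("attempts must be non-negative")
    # Complement of "every single attempt is blocked".
    return 1.0 - (1.0 - per_attempt_rate) ** attempts


def expected_bypasses(per_attempt_rate: float, attempts: int) -> float:
    """Average number of attempts expected to get through."""
    return per_attempt_rate * attempts


# Assumed example: a check that misses 0.1% of fakes, hit with 5,000 attempts.
rate = 0.001
attempts = 5000
p_any = prob_at_least_one_bypass(rate, attempts)
avg = expected_bypasses(rate, attempts)
```

Under these assumptions, a control that stops 99.9% of synthetic submissions still lets roughly five through on average, and the chance that at least one succeeds is above 99%.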

The implication is stark: trust in traditional identity artifacts is degrading. Detection must evolve from spotting obvious fakes to identifying subtle inconsistencies across space and time, and in how independent signals correlate with one another.

Fraud Is a User Experience Problem

One of the most important conclusions was also one of the simplest. Fraud prevention and user experience are not opposing forces. Poorly designed friction drives abandonment, encourages rule relaxation, and ultimately increases risk. Thoughtful experience design, aligned with adaptive risk controls, protects both revenue and users.

This reframing challenges organizations to rethink how fraud teams are structured, how success is measured, and who is involved in designing defenses. In a world of AI-driven fraud, usability is not a luxury. It is a control surface.

A Shared Responsibility Going Forward

The Fraud Forum closed not with definitive answers, but with clarity about what comes next. Fraud is no longer confined to edge cases or isolated attacks. It is systemic, adaptive, and accelerating. Addressing it will require collaboration across industries, pressure on institutions that control identity data, and a willingness to rethink long-held assumptions about trust online.