AI, Fraud, and the Question of Responsibility

Artificial intelligence has become a double-edged sword for those of us in the fraud-fighting and identity space. On the one hand, AI helps us immensely in our jobs; for example, it recognizes patterns in massive data sets to identify potentially fraudulent behavior.

On the other hand, AI has also made it incredibly easy for any aspiring fraudster to launch attacks that can bypass most detection systems. That's the topic I want to talk about today, along with the responsibility AI makers have to ensure that their technology is not abused.

AI as a Tool for Fraudsters

One of my favorite pastimes is experimenting with new AI tools. I recently tested a new image generation model, and with just a simple prompt, I was able to create a fake passport image that looked convincingly real in under a minute.

I obviously wasn’t trying to commit fraud—just testing the system. But the ease with which I could generate and modify a seemingly legitimate document was alarming and should raise red flags for AI companies.

For example:

- When I asked the AI to “remove the watermark” on a document, it erased the official stamp.

- When I prompted it to remove a red circle, it also erased the word “CANCELLED” from a passport.

This raises an urgent question: How easy is it for fraudsters to exploit AI? The answer, unfortunately, is very easy—and they’re already doing it.

A Growing Threat to Security

Fake IDs, passports, bank statements, and utility bills are commonly used in fraud schemes. Previously, creating realistic fakes required graphic design expertise and other technical know-how. Now, AI tools lower the barrier to entry, enabling anyone with an internet connection to generate convincing counterfeit documents.

We’ve already seen real-world consequences:

- Deepfake videos and voices have been used in social engineering scams, tricking people into transferring money or disclosing sensitive information.

- AI-generated documents have bypassed weak identity verification checks, enabling criminals to open fraudulent bank accounts or commit financial fraud.

Who Bears the Responsibility?

The power of AI is undeniable, but with great power comes great… well, you know the rest. The question is: who is responsible when AI is misused?

- Should tech companies be responsible for ensuring their models cannot be used for fraud?

- Should regulators step in to impose stricter controls on AI tools?

- Should businesses using AI be required to implement stronger safeguards?

The truth is, it’s a shared responsibility. Tech companies must ensure their AI models have built-in safeguards to prevent abuse. Regulators need to establish clear guidelines. And businesses relying on identity verification must adopt fraud-resistant solutions—such as AI-driven document authentication tools—to detect tampered or AI-generated IDs.

But addressing AI-enabled fraud isn’t just about individual companies implementing safeguards—it also requires collaboration across industries. Tech companies developing GenAI models should actively partner with identity verification and fraud detection experts to pentest their models and proactively identify vulnerabilities before bad actors can exploit them. By working hand in hand with domain experts, AI providers can better understand real-world fraud tactics and implement safeguards such as built-in watermarks or tamper detection measures. A consortium approach, where AI companies, security firms, and regulators align on best practices, could help create standardized solutions that benefit everyone—ensuring AI remains a force for good rather than a tool for fraudsters.

AI: The Problem and the Solution

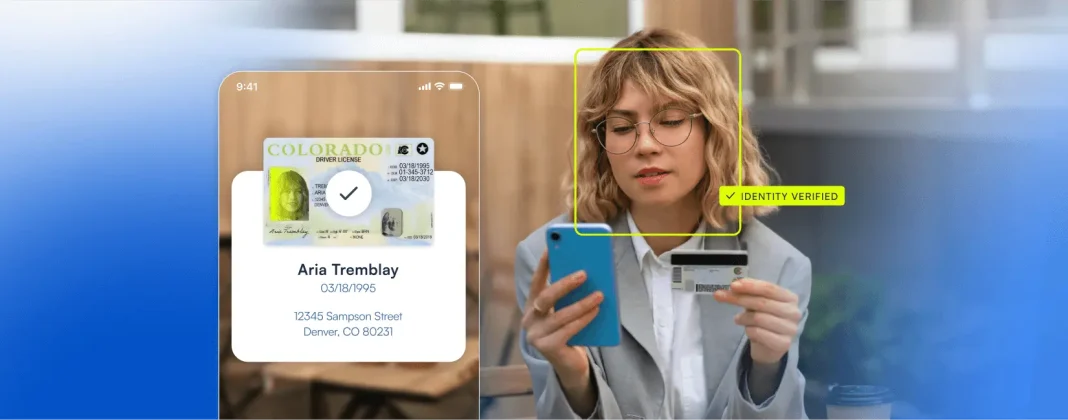

Ironically, while AI is being used to create fraudulent documents, it’s also one of the best tools we have for detecting them. Advanced fraud prevention technologies, like AI-powered document verification, can analyze images for subtle inconsistencies that indicate manipulation.
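
To make that idea concrete, here is a minimal sketch in Python of one kind of inconsistency check. It is a toy illustration, not how any production document verification product works. It assumes the image is already available as a grid of grayscale pixel values, and it flags tiles that are suspiciously smooth compared with the rest of the image, which is the kind of trace a crude erase-and-fill edit can leave behind. The function names, the 8-pixel tile size, and the 0.1 threshold ratio are all assumptions chosen for the example.

```python
import random
import statistics


def block_variances(pixels, block=8):
    """Return {(tile_row, tile_col): variance} for each block x block tile."""
    height, width = len(pixels), len(pixels[0])
    result = {}
    for top in range(0, height - block + 1, block):
        for left in range(0, width - block + 1, block):
            tile = [
                pixels[y][x]
                for y in range(top, top + block)
                for x in range(left, left + block)
            ]
            result[(top // block, left // block)] = statistics.pvariance(tile)
    return result


def suspicious_blocks(pixels, block=8, ratio=0.1):
    """Flag tiles whose variance is far below the image's median tile variance.

    The ratio is a hypothetical threshold picked for this example only.
    """
    variances = block_variances(pixels, block)
    median = statistics.median(variances.values())
    return sorted(pos for pos, var in variances.items() if var < ratio * median)


# Synthetic 32x32 "scan": mid-gray paper with light sensor noise everywhere.
rng = random.Random(0)
image = [[128 + rng.randint(-10, 10) for _ in range(32)] for _ in range(32)]

# Simulate a crude edit: one 8x8 region painted over with a flat gray patch.
for y in range(8, 16):
    for x in range(16, 24):
        image[y][x] = 128

flagged = suspicious_blocks(image)
print(flagged)
```

In this synthetic example, only the painted-over tile at tile row 1, tile column 2 is flagged. A real check would need far more than this single signal, since a skilled edit or a generative model can reproduce plausible noise.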

At the end of the day, AI is a tool, and like any tool, it can be used for good or ill. The key is ensuring that the right safeguards are in place to prevent abuse while still allowing innovation to thrive.

We stand at a crossroads. Will we build AI systems that prioritize security and trust? Or will we allow fraudsters to exploit the cracks? The answer will shape the future of AI and fraud prevention.

If you’d like to learn more about how Microblink can protect your business from threats, AI-powered or otherwise, get in touch today and we’d be happy to share a demo.