Deepfake Agentic AI Fraud: Why Your Current Identity Verification Fails

Artificial intelligence is reshaping digital commerce at an unprecedented pace. While generative AI tools have already enabled fraudsters to create convincing fake identities and biometric data, the next stage of this evolution is emerging: deepfake agentic AI. In this model, autonomous AI agents equipped with synthetic identities can interact with platforms, initiate transactions, and bypass verification systems at scale.

For risk management specialists, this shift represents a significant escalation in identity fraud threats. Traditional fraud prevention systems were designed to detect suspicious human behavior. Deepfake agentic AI introduces a new class of threats where automated systems can simulate human identity signals, manipulate biometric verification processes, and interact with onboarding workflows without direct human involvement.

Understanding how deepfake agentic AI operates, and how to defend against it, is now critical for organizations responsible for protecting digital trust.

How Deepfake Agentic AI Enables Synthetic Identity Fraud

Deepfake technology has already proven capable of generating highly convincing images, video, and audio that mimic real individuals. When combined with agentic AI systems that can autonomously perform tasks, the result is a powerful fraud mechanism capable of orchestrating identity attacks with minimal human input.

Fraudsters can generate realistic facial images or videos that pass basic biometric checks during identity verification. These synthetic biometrics can then be paired with stolen personal data obtained from breaches to create fully formed digital identities. Once deployed, agentic AI can automate actions such as opening accounts, completing verification flows, initiating transactions, or interacting with customer support systems.

Because these agents operate continuously and at machine speed, they allow fraud campaigns to scale dramatically. What once required manual coordination by organized fraud rings can now be executed automatically by AI-driven systems capable of interacting with digital platforms around the clock.

The risk is not limited to financial services. E-commerce platforms, gaming networks, travel services, and digital marketplaces are all vulnerable to these automated identity attacks.

Why Deepfake Agentic AI Is Difficult to Detect

Detecting deepfake agentic AI attacks presents unique challenges for existing fraud detection systems. Many identity verification tools rely on static signals such as document authenticity checks, facial comparison, or knowledge-based authentication questions. While these methods may detect simple impersonation attempts, they are often insufficient against sophisticated AI-generated identities.

Deepfake generation models continue to improve rapidly, producing faces and voice samples that closely replicate real biometric traits. These systems can simulate natural movements, facial expressions, and speech patterns that defeat basic liveness detection techniques. When paired with automation frameworks, agentic AI can repeatedly attempt verification processes until it finds weaknesses in the system.

Another challenge is that fraud detection models often rely on historical behavioral patterns. Agentic AI can mimic legitimate customer behavior patterns while executing fraudulent activity, making anomaly detection more difficult. In many cases, fraud does not appear suspicious until after an account has already been established and transactions have begun.

The result is a threat environment where fraudsters can test and adapt their tactics quickly, exploiting gaps in identity verification systems faster than traditional security controls can respond.

Detection Methods for Deepfake Agentic AI Attacks

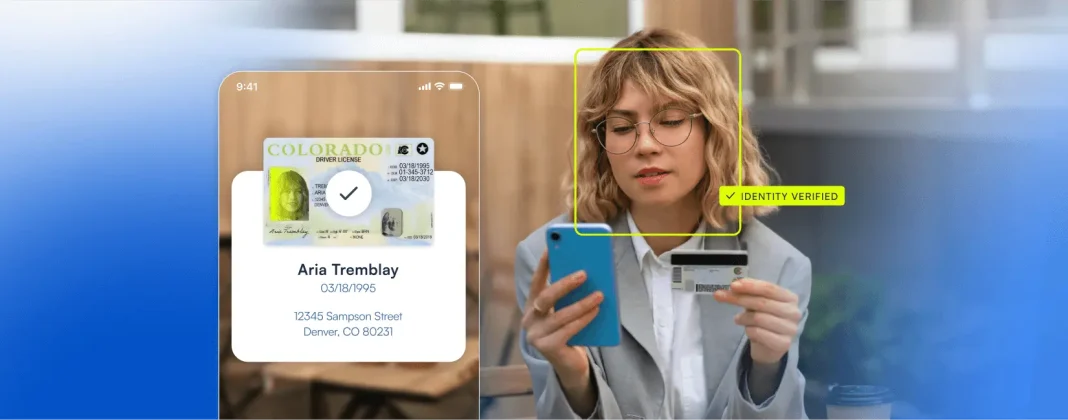

To defend against deepfake agentic AI fraud, organizations must deploy identity verification systems capable of analyzing biometric signals at a deeper level while incorporating multiple layers of fraud detection.

Modern detection techniques include advanced liveness verification methods that evaluate subtle facial movements, micro-expressions, and environmental cues that are difficult for AI-generated media to replicate. Voice authentication systems can analyze vocal biometrics to detect anomalies introduced by synthetic speech generation tools.

In addition to biometric signals, organizations increasingly rely on behavioral analytics and device intelligence to detect suspicious patterns of interaction. For example, automated agents often exhibit subtle differences in interaction timing, navigation patterns, or device configurations that distinguish them from legitimate users.

Combining these signals creates a more resilient defense system that evaluates identity across multiple dimensions rather than relying on a single verification step.
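As one illustration of the interaction-timing signal mentioned above, the sketch below flags sessions whose events are spaced too evenly to look human. It is a minimal example rather than a production detector: the function names and the `min_cv` threshold are assumptions made for illustration, and a real system would tune them on labelled traffic and combine the result with other signals.

```python
from statistics import mean, pstdev


def timing_regularity(event_times_ms):
    """Return the coefficient of variation of the gaps between events.

    A value near 0 means the events are almost perfectly evenly spaced.
    Returns None when there are too few events to judge.
    """
    gaps = [b - a for a, b in zip(event_times_ms, event_times_ms[1:])]
    if len(gaps) < 2:
        return None
    avg = mean(gaps)
    if avg <= 0:
        return 0.0
    return pstdev(gaps) / avg


def looks_automated(event_times_ms, min_cv=0.15):
    """Flag a session whose timing varies less than min_cv (hypothetical threshold)."""
    cv = timing_regularity(event_times_ms)
    return cv is not None and cv < min_cv


# Timestamps (ms) of form interactions in two illustrative sessions.
scripted_session = [0, 100, 200, 300, 400]
human_session = [0, 180, 950, 1300, 2900]
print(looks_automated(scripted_session), looks_automated(human_session))
```

A sophisticated agent can add random jitter to its timing, so a check like this is useful only as one input among several.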

Framework for Defending Against Deepfake Agentic AI

Protecting digital platforms from deepfake agentic AI requires a layered identity verification strategy that combines biometric analysis, machine learning, and continuous risk monitoring.

| Defense Layer | Purpose | Example Capabilities |
|---|---|---|
| Advanced Liveness Detection | Confirms that a real human is present during biometric verification | Micro-expression analysis, motion detection, environmental consistency checks |
| Document Authentication | Verifies government-issued identity documents and detects manipulation | Optical character recognition, hologram validation, AI-based forgery detection |
| Behavioral Analytics | Identifies abnormal interaction patterns associated with automated agents | Interaction timing analysis, session behavior monitoring |
| Machine Learning Risk Scoring | Evaluates identity signals in real time to determine risk level | Adaptive fraud models, anomaly detection algorithms |
| Continuous Authentication | Monitors identity signals beyond onboarding to detect suspicious activity | Device fingerprinting, transaction monitoring |

By layering these defenses, organizations can significantly reduce the likelihood that synthetic identities or deepfake-generated biometrics will successfully bypass verification systems.
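One simple way to picture the layering is as a weighted combination of per-layer risk scores. The sketch below assumes each layer already reports a score between 0 (no concern) and 1 (high concern). The layer keys and weights are hypothetical values chosen for illustration, not recommended settings.

```python
# Hypothetical weights per defense layer; real values would be tuned on labelled data.
WEIGHTS = {
    "liveness": 0.30,
    "document": 0.25,
    "behavior": 0.20,
    "ml_model": 0.15,
    "device": 0.10,
}


def combined_risk(layer_scores: dict[str, float]) -> float:
    """Weighted average of the supplied layer scores (0 = safe, 1 = risky).

    Layers that did not report a score are skipped, and the remaining
    weights are renormalised so the result stays between 0 and 1.
    """
    total = 0.0
    total_weight = 0.0
    for name, weight in WEIGHTS.items():
        if name in layer_scores:
            score = min(max(layer_scores[name], 0.0), 1.0)  # clamp to [0, 1]
            total += weight * score
            total_weight += weight
    if total_weight == 0:
        raise ValueError("no known layer scores supplied")
    return total / total_weight


print(combined_risk({"liveness": 0.9, "document": 0.1, "behavior": 0.8}))
```

A weighted average lets several weak signals add up, but it can also dilute one strong signal. A production system might additionally let a single high-confidence failure, such as a failed liveness check, block the attempt outright.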

Maintaining Compliance Without Sacrificing User Experience

As deepfake threats grow more sophisticated, businesses face increasing pressure to strengthen identity verification processes while maintaining a seamless customer experience. Know Your Customer (KYC) and Anti-Money Laundering (AML) regulations require organizations to implement strong identity validation controls, yet excessive friction during onboarding can drive away legitimate users.

The key is implementing intelligent verification workflows that dynamically adjust the level of security based on risk signals. Low-risk users can move through verification quickly with minimal friction, while suspicious cases trigger additional verification steps such as biometric checks or document validation.

This adaptive approach allows organizations to meet compliance requirements while preserving a smooth onboarding experience for legitimate customers. Automated identity verification platforms play a critical role in enabling this balance by analyzing identity signals in real time and applying risk-based decisioning.
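The risk-based workflow described in this section can be sketched as a function that maps a risk score to the verification steps a user must complete. The step names and the `low` and `high` thresholds are illustrative assumptions; actual cut-offs depend on an organization's risk appetite and regulatory obligations.

```python
def required_steps(risk: float, low: float = 0.3, high: float = 0.7) -> list[str]:
    """Return the verification steps for a risk score between 0 and 1.

    Thresholds and step names are hypothetical. Low-risk users get the
    lightest path; each threshold crossed adds one more check.
    """
    if not 0.0 <= risk <= 1.0:
        raise ValueError("risk must be between 0 and 1")
    steps = ["basic_data_checks"]
    if risk >= low:
        steps.append("document_validation")
    if risk >= high:
        steps.append("biometric_liveness_check")
    return steps


for score in (0.1, 0.5, 0.9):
    print(score, required_steps(score))
```

Keeping the thresholds as parameters makes it easier to tighten or relax friction without changing the workflow itself.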

Machine Learning and Continuous Authentication

Fraud prevention strategies must evolve continuously to keep pace with deepfake agentic AI. Machine learning models trained on large datasets of legitimate and fraudulent identity interactions can detect subtle patterns that traditional rule-based systems miss.

These models can analyze signals such as document characteristics, biometric behavior, device attributes, and interaction timing to identify emerging fraud techniques. Over time, continuous model training allows systems to adapt as fraudsters introduce new deepfake generation methods.
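A trained fraud model is beyond the scope of a short example, but the idea of a baseline that adapts as new data arrives can be shown with a much simpler stand-in. The sketch below keeps a running mean and variance for a single numeric signal using Welford's online algorithm and reports how unusual a new observation is. The class name and the example signal (seconds taken to complete an onboarding form) are assumptions for illustration.

```python
import math


class RunningBaseline:
    """Online mean and variance for one numeric signal (Welford's algorithm)."""

    def __init__(self) -> None:
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, x: float) -> None:
        """Fold one new observation into the baseline."""
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    def zscore(self, x: float) -> float:
        """Number of sample standard deviations x lies from the mean."""
        if self.n < 2:
            return 0.0  # not enough history to judge
        std = math.sqrt(self._m2 / (self.n - 1))
        if std == 0:
            return 0.0 if x == self.mean else math.inf
        return (x - self.mean) / std


# Hypothetical signal: seconds taken to complete the onboarding form.
baseline = RunningBaseline()
for seconds in (10, 12, 11, 9, 13):
    baseline.update(seconds)
print(baseline.zscore(30) > 3)
```

Because each update is incremental, the baseline shifts as legitimate behavior changes, which is a simplified version of the continuous retraining described above.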

Continuous authentication also extends identity verification beyond the initial onboarding step. Instead of verifying identity once and assuming trust indefinitely, modern systems monitor identity signals throughout the user lifecycle. This approach helps detect compromised accounts, synthetic identities, or automated agents attempting to exploit established accounts.
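As a rough sketch of monitoring beyond onboarding, the example below compares the device attributes recorded at enrollment with those seen in a later session and asks for re-verification when too many have changed. The attribute names and the `max_drift` threshold are hypothetical, and real device fingerprinting uses far richer signals.

```python
def fingerprint_drift(enrolled: dict, current: dict) -> float:
    """Fraction of enrolled attributes whose value differs in the current session."""
    if not enrolled:
        return 0.0
    changed = sum(1 for key, value in enrolled.items() if current.get(key) != value)
    return changed / len(enrolled)


def needs_reverification(enrolled: dict, current: dict, max_drift: float = 0.5) -> bool:
    """True when more than max_drift (hypothetical threshold) of attributes changed."""
    return fingerprint_drift(enrolled, current) > max_drift


# Illustrative attributes captured at onboarding and in a later session.
enrolled = {"os": "iOS 17", "timezone": "Europe/Berlin", "screen": "1170x2532", "language": "de-DE"}
later = {"os": "Linux", "timezone": "UTC", "screen": "1920x1080", "language": "de-DE"}
print(fingerprint_drift(enrolled, later), needs_reverification(enrolled, later))
```

Legitimate users also change devices, so a drift signal like this would normally trigger a step-up check rather than an outright block.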

The Future of Identity Fraud Defense

Deepfake agentic AI represents a new frontier in identity fraud. Autonomous agents equipped with synthetic identities have the potential to exploit weaknesses in digital onboarding systems at a scale previously unimaginable.

For businesses operating in highly regulated industries, defending against these threats requires more than incremental improvements to existing fraud detection tools. It requires a comprehensive identity verification strategy that combines advanced biometrics, machine learning analysis, behavioral intelligence, and continuous authentication.

Microblink’s identity verification technology is designed to help organizations address these emerging threats by delivering high-accuracy document verification, advanced biometric analysis, and real-time risk signals that detect fraudulent identities before they can compromise digital platforms.

As AI-driven fraud continues to evolve, organizations that invest in adaptive identity intelligence systems will be best positioned to protect both their customers and their businesses.