Knowing Who — or What — We’re Talking To in an Age of Autonomous AI

Moltbook, the Reddit-style social platform built for AI agents, already has more than 1.5 million users. Humans are watching what happens there with curiosity and concern.

AI systems are no longer just tools responding to prompts. They initiate actions, interact with other systems, and adapt their behavior with increasing autonomy. Some are creating business relationships with each other, just like humans do. That shift brings extraordinary possibility and a pressing question we’re all starting to ask:

How do we know who, or what, we’re actually interacting with?

This isn’t about fear of AI. Autonomy isn’t inherently dangerous. In many cases, it’s exactly what enables efficiency, scale, and entirely new forms of value. But autonomy does change the nature of trust. When systems can act independently, trust can no longer be assumed based on a single moment of verification or a static identity.

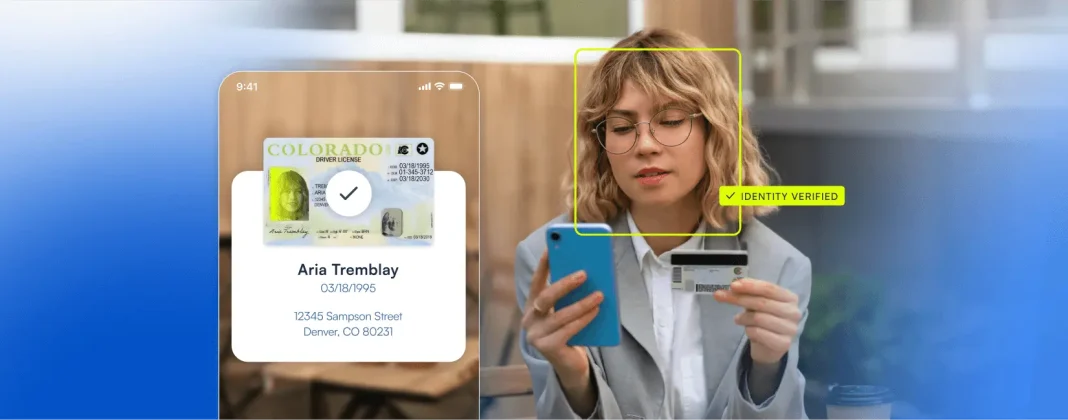

For decades, digital identity was built around a human showing up, proving who they are, and proceeding to their task. That model still matters, but it no longer describes reality. Today, humans, bots, and AI-driven agents coexist within the same digital environments. Sometimes they collaborate. Sometimes they impersonate. Sometimes they blend in ways that are hard to distinguish.

The risk isn’t that AI exists. The risk is ambiguity. When systems can’t tell legitimate automation from abuse, or intent from imitation, that ambiguity is exactly where fraud thrives.

What I’m seeing is an emerging shift in how teams think about identity: less focus on identity as a fixed attribute, and more focus on actors. How do we determine who or what is acting in a given moment? How does behavior change over time, and when should trust be re-evaluated?

Microblink refers to this mindset as Know Your Actor. Not as a replacement for identity verification, but as an evolution of it. Identity tells you who someone claims to be. Understanding the actor tells you how trust should function in real time, especially when autonomy is involved.

The path forward isn’t about locking systems down or distrusting automation by default. It’s about designing shared technical, ethical, and collaborative rules of engagement so humans and AI can operate together without creating blind spots that bad actors exploit.

Engineers, operators, policymakers, and technologists should work through this uncertainty in the open, so that trust rests on a shared understanding rather than on assumptions. Microblink’s fraud lab is tackling this challenge every day. We’re constantly learning and adapting to stay in step with the ways humans and bots use this sophisticated new technology to mimic identity quickly.

The future won’t ask us to choose between humans and AI. It will ask us to understand both and to know who we’re really talking to.